In today's world, more and more people are looking to start their own business. However, the process of registering as an individual entrepreneur […]

Timur Turlov: How the Law of Synergy Works in Business

Timur Turlov, founder of Freedom Holding Corp., is building a unique ecosystem in Kazakhstan. What is the secret of success […]

Legalizing Unauthorized Construction in Moscow: A Step-by-Step Guide

Unauthorized construction is the erection of a structure without the required permits, in violation of current law. […]

Choosing Summer Tires: What to Look For When Buying 225/60 R17

Summer tires play a key role in driving safety during the warm season. The optimal choice of size […]

Getting a Loan Secured by an Apartment in Astana: A Step-by-Step Guide

When funds are needed urgently, many people look for ways to borrow against property they already own. In large […]

Wholesale Sterilization Pouches: An Ideal Solution for Medical Facilities

Sterilization pouches are an integral part of the work of any medical facility, designed to ensure the sterility of instruments […]

Effective SEO Promotion: Key Strategies for Your Website's Success

SEO (Search Engine Optimization) is a set of measures aimed at improving the visibility […]

Turnkey Online Course Creation: Bringing Your Ideas to Life Without Any Effort

With the development of digital technology and growing interest in distance education, the service of creating online courses on a "turnkey […]

Real Estate in Abu Dhabi

Abu Dhabi, the capital of the United Arab Emirates, is known for its modern architecture, rich culture, and impressive opportunities for […]

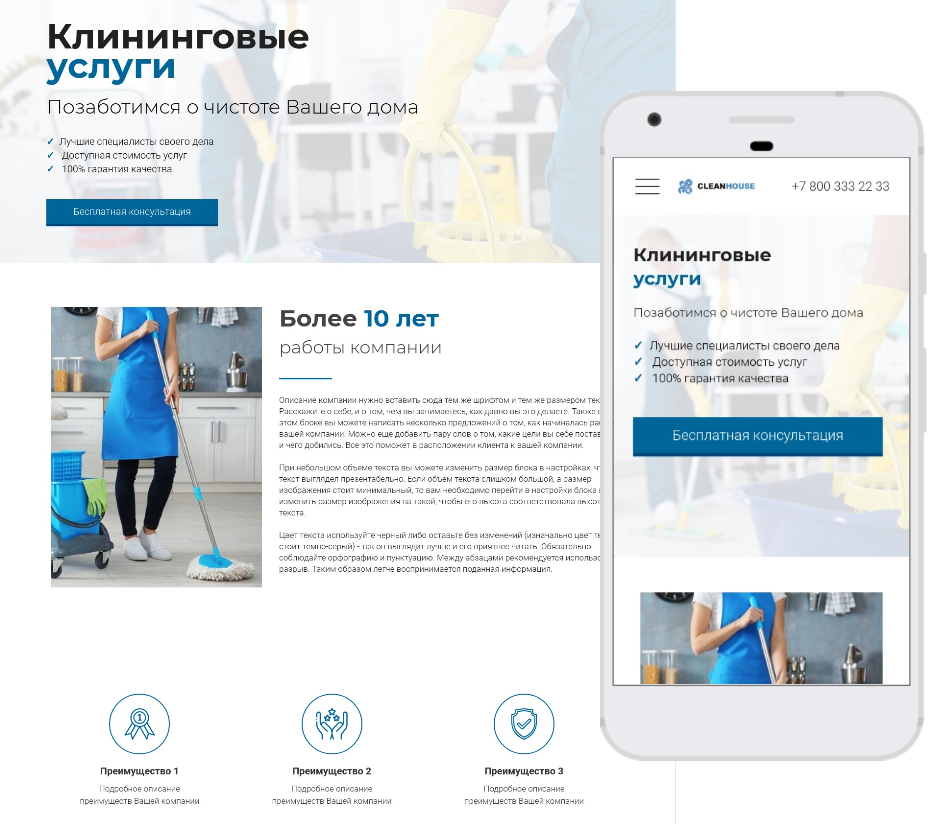

The Ideal Website Template for a Cleaning Company: How to Build an Attractive and Effective Website for Your Business

A website is an important tool for attracting new clients and building trust with existing customers […]